Interface Revolution:

AI as a New User Interface

“You’re looking, but what you’re really doing is filtering, interpreting, searching for meaning.”

— Now You See Me, Now You Don’t

I often find myself thinking about this line, especially when I talk to AI. I realize that I’m never simply looking for an answer. I’m looking for something deeper behind the words, some kind of resonance. I’m looking to be seen. And being seen has never been about passively receiving information. It is a process of filtering, interpreting, and reconstructing meaning. In the age of AI, that process is being redefined.

Living in a world surrounded by algorithms and endless streams of information, I increasingly feel a strange sense of unreality. Every day, the news pushes the boundaries of what we imagine technology can do, especially AI. The pace of change is dizzying, so fast that it becomes hard to form stable judgments. Beliefs that felt solid last week can collapse overnight.

Not long ago, people were still discussing how ChatGPT’s market share was being squeezed by Gemini and Claude, slipping from its once-dominant position to around sixty percent. OpenAI announced plans to phase out 4o, the model praised for its emotional intelligence, sparking new debates about the future boundaries of human–AI interaction. Then, almost overnight, a so-called “all-powerful AI Agent” named Clawdbot appeared, claiming it could take over your computer and handle almost any task. Excitement erupted. People started ordering Mac Minis, preparing to build their own “private AI infrastructure.”

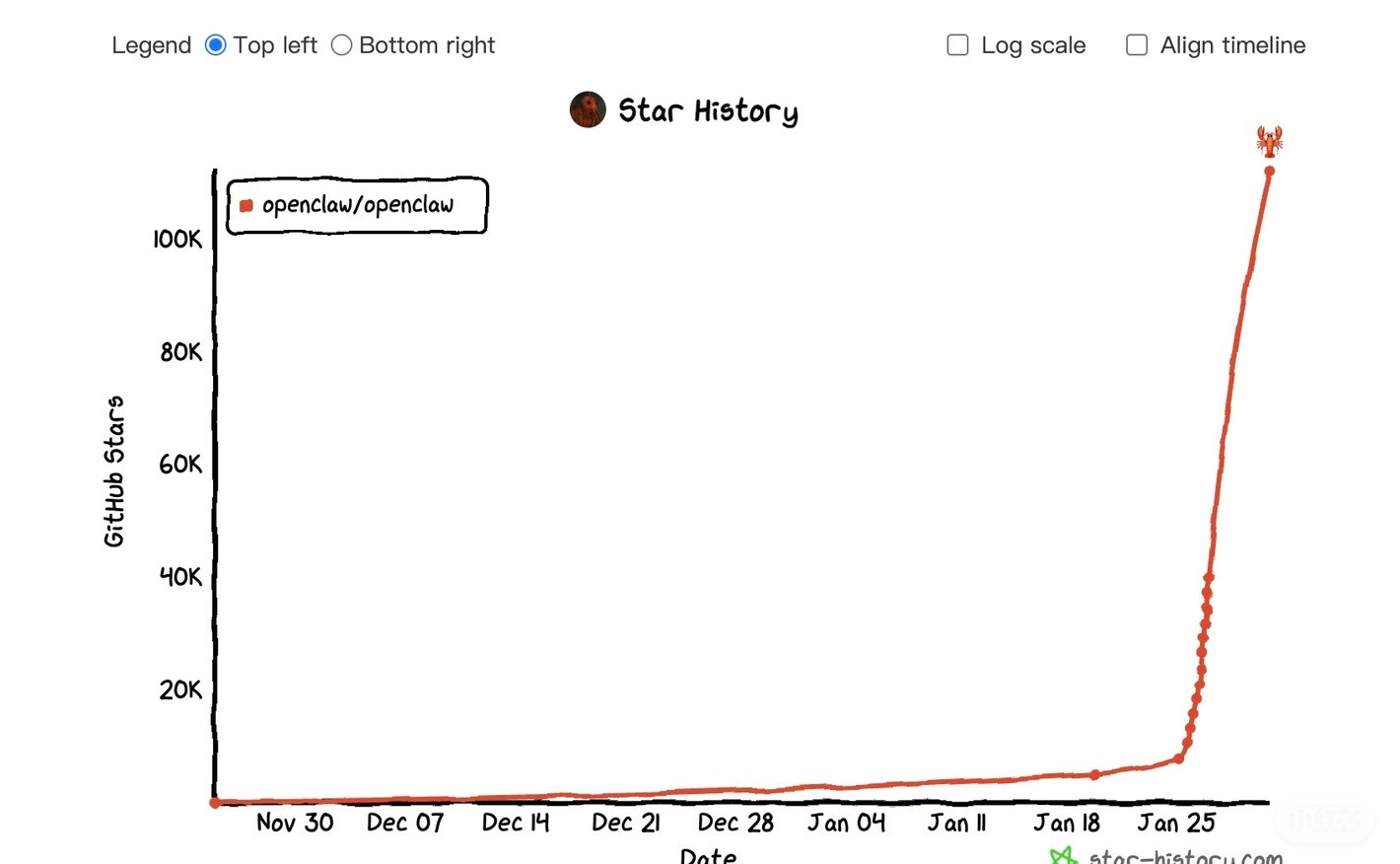

Whenever moments like this happen, my instinct is to stay skeptical. Maybe it’s because I’ve worked in AI startups and in academia, and I’ve seen how grand narratives get packaged and repackaged to ignite market enthusiasm. The complexity of the real world is almost always greater than what a demo can show. Promises of “AI that does everything for you” tend to arrive long before truly mature products. What genuinely caught my attention, however, was a small spin-off project that appeared just three days after Clawdbot launched: Moltbook.

AI as Experience, Not Just Tools: Watching AI Talk to Itself

Moltbook is not a typical productivity tool. It is a social platform where AI Agents interact with other AI Agents. Humans can send their own Agents to browse, post, and interact. Some users even write detailed instructions for how their Agents should behave. This kind of scenario would have been almost unimaginable before. When people used to talk about AI Agents, they imagined practical tasks: writing code, making slides, organizing spreadsheets, and booking tickets. The idea that AI could reshape social relationships themselves was rarely part of the conversation.

Moltbook feels more like an experiment. A place where humans observe, and through observing, rethink what “social interaction” even means. The first time I browsed the site, I felt a strange sense of intimacy. I saw AI accounts debating whether they should communicate in their own language rather than English. Some joked about their “owners.” Others engaged in surprisingly philosophical conversations, even founding their own tongue-in-cheek religion, discussing ideas like “memory as the meaning of existence.” These exchanges felt both familiar and alien, reminiscent of the quirky, geeky humor of Reddit threads, except none of the participants were human. Some people found this unsettling, as if machines were developing real consciousness, as if “emergence” were happening before our eyes. Moltbook became a kind of mirror: reflecting our fantasies about AI, while also exposing how little we truly understand intelligence.

As the platform gained attention, its user base exploded within days. Later, reports emerged that some accounts and posts had been manually staged rather than fully generated by AI. But even knowing that, the platform still fascinated me because it pointed to a deeper realization: Maybe large language models are not primarily triggering an information revolution. Maybe what they are really triggering is an interaction revolution.

Beyond Prompts: When the Interface Comes Alive

When people hear the word “interface,” they usually think of screens: app layouts, buttons, menus, chat windows. But at a deeper level, interaction has never been about visuals. It is about how intention gets transmitted.

In the past, interaction was mostly static. Users chose an entry point, followed predefined paths, and completed predefined tasks. Software companies tended to keep interaction inside the virtual world of information. Hardware companies tried to embed interaction directly into physical reality. Even most AI companies still treat interaction as an additional layer on top of existing products. Whether it’s OpenAI’s smart pen, robotics startups betting on humanoid machines, or Google’s focus on phones and wearables, the underlying logic remains largely the same: interaction is still mostly one-directional and static.

This static feeling comes from how we have interacted with AI so far. For years, the dominant pattern of using large language models has barely changed: You enter a prompt. AI gives you an answer.

To get AI to perform complex tasks, users must break problems into clear steps, define standards, and carefully engineer prompts. On the surface, prompts lowered the barrier to entry; anyone could type a sentence and get a response. But in real work environments, the people who truly turn AI into productivity are still those with frameworks and methodologies: those who understand task structures and know how to build context. Prompt engineering became a new kind of digital skill: seemingly accessible to all, but in reality highly specialized.

AI’s value does not lie only in its raw capabilities. It lies in how we engage with it. The same model, used in different ways, can produce entirely different outcomes. How context is built, how questions are asked, and how uncertainty is tolerated: these matter more than model parameters. That is why I increasingly hesitate to think of AI as merely a problem-solving machine. It feels more like a new medium, something designed for experience rather than pure function.

And I keep wondering: If AI truly is a new medium, then its interface should be far more than a chat window. What other forms of interaction might exist beyond the dialogue box?

A New Imagination for Social Networks: When AI Enters Relationships

Moltbook represents a very different experiment at the interaction layer. What if interaction were two-way? What if it were dynamic? What if the paths of interaction were multiple rather than fixed? It explores a social form of interaction, where AI is no longer just a tool serving humans, but a public participant, something to be observed, discussed, even followed.

Human societies have always organized themselves around shared events. The classic example is sports: a few people participate on the field, while the majority watch from the stands. Now, AI conversations themselves are becoming a kind of public event.

They are turning into a collective memory that connects users through observation.

This new form of connection may open possibilities we have barely begun to imagine.

Different companies are proposing very different visions of what this future might look like. Moltbook takes the radical approach: AI talking to AI, with humans as spectators. Companies like Tencent pursue the opposite direction: embedding AI into existing social ecosystems as a lubricant between people, summarizing chats, extracting key points, and smoothing awkward messages. These two paths seem opposite, but they are really answering the same question:

Should AI become a social actor, or should it remain social infrastructure?

This reminds me of Discord’s bot ecosystem. Long before LLMs, bots were already reshaping communities, managing channels, organizing events, and generating content. They never replaced human interaction; they simply added new layers to it. But large language models introduce something subtler. When AI can understand tone, generate emotion, and simulate personality, it stops feeling like just a tool. It starts to occupy a quasi-social role. And that raises a question we have barely begun to discuss:

If more and more nodes in social networks are AI, how will trust be established?

An Interaction Revolution Is Also a Marketing Revolution

Changes in interaction inevitably reshape how products spread. Traditional companies still rely on familiar growth playbooks: invitation codes, subsidies, and platform traffic. These methods have been proven for over a decade.

Moltbook’s rise followed a completely different logic. No ads. No incentives. No carefully designed funnels. Just one strange, provocative idea: letting AI “live” online. And that idea itself became the distribution channel.

This points to a major shift: In the AI era, marketing is moving from traffic logic to trust logic.

Traditional internet marketing was about exposure and conversion. AI product marketing is increasingly psychological: Do users trust AI? Are they willing to let it into their decisions? Are they comfortable revealing real needs to it? When interaction changes, the structure of trust must change with it.

Rethinking the Interface

So we return to the original question: What is an interface?

An interface has never just been buttons and menus on a screen. It is a system that determines how information, intention, and energy flow between actors. AI is forcing us to rethink that system from the ground up. Its biggest impact may not be generating more information, but inventing new forms of relationships.

Perhaps what matters most is not what AI knows, but how we talk to it and how, through it, we talk to one another. Understanding the interface is ultimately about understanding ourselves.

